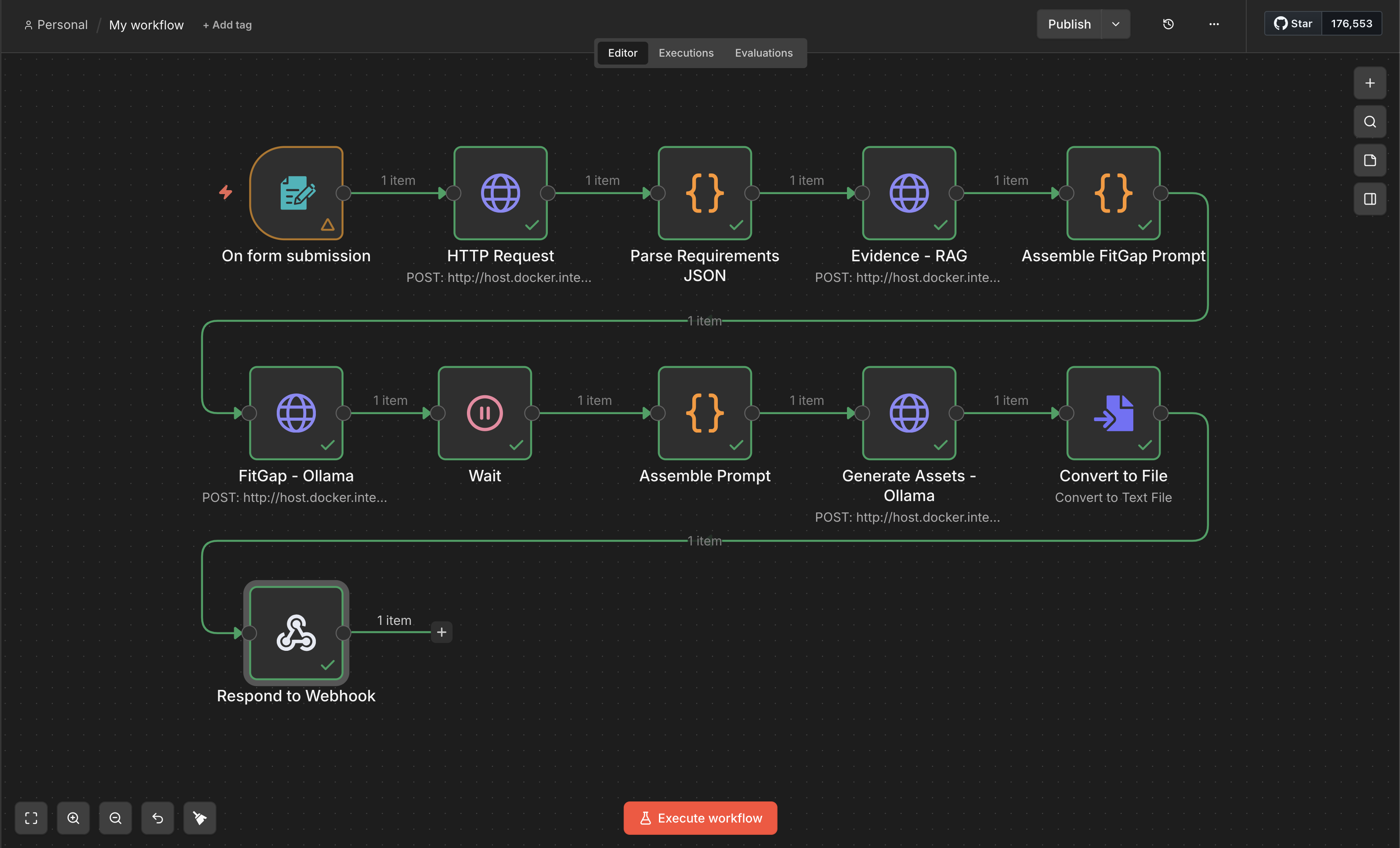

Agentic Workflow • 2026 • AI Agents • Tool Use • Human-in-the-loop • Workflow Automation

AI PM Ops Copilot: JD → Fit-Gap → Tailored Assets (Human-in-the-loop)

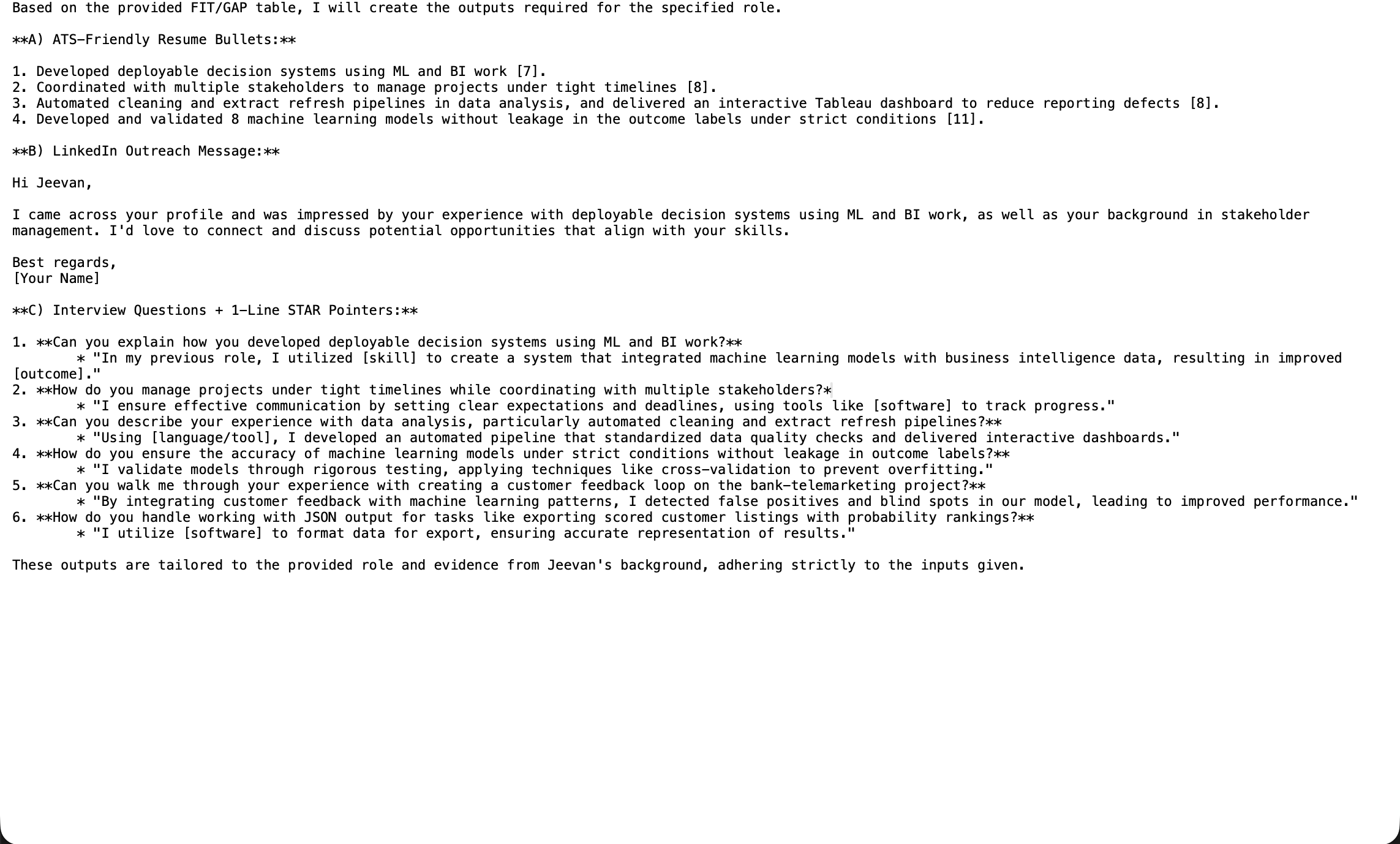

Agentic workflow that turns a job description into a fit-gap matrix, portfolio edits, outreach drafts, and interview prep, with approvals and audit logs at each step.

- Time saved / application: target 60–75%

- User edit acceptance: tracked per artifact

- Reliability: validation checks + refusal rules

- Auditability: run history + sources logged

Problem

Most job-application automation fails because it is either too generic or too risky: it hallucinates or misrepresents the candidate's experience. The goal was a controlled agent workflow that produces high-signal assets while keeping the user in charge through approvals and evidence-backed drafting.

Approach

- Designed a tool-using workflow: parse JD → extract requirements → map evidence from resume/projects → draft assets.

- Added human-in-the-loop checkpoints before final writing (approve, edit, or regenerate).

- Implemented validation rules (no claims without evidence; consistent dates/titles; format checks).

- Created run history: inputs, outputs, decisions, and timestamps for auditability.

- Shipped a simple UI so users can iterate quickly and track outputs per role.
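The steps above can be sketched in a few dozen lines. This is a minimal illustration under stated assumptions, not the project's actual implementation: `FitGapRow`, `RunLog`, `extract_requirements`, `map_evidence`, and `run_workflow` are hypothetical names, the JD parser simply reads `- ` bullets, and keyword overlap stands in for whatever LLM or retrieval step does the real evidence mapping.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable, Optional


@dataclass
class FitGapRow:
    requirement: str
    evidence: list  # resume/project lines that support the requirement

    @property
    def status(self) -> str:
        return "fit" if self.evidence else "gap"


@dataclass
class RunLog:
    """Append-only run history: one timestamped event per workflow step."""
    events: list = field(default_factory=list)

    def record(self, step: str, **details) -> None:
        stamp = datetime.now(timezone.utc).isoformat()
        self.events.append({"step": step, "at": stamp, **details})


def _tokens(text: str) -> set:
    return {w.lower().strip(".,;:()") for w in text.split()}


def extract_requirements(jd_text: str) -> list:
    # Assumption: each "- " bullet in the JD is one requirement.
    return [ln[2:].strip() for ln in jd_text.splitlines() if ln.startswith("- ")]


def map_evidence(requirement: str, resume_lines: list) -> list:
    # Assumption: keyword overlap is a stand-in for an LLM/embedding match.
    keywords = {t for t in _tokens(requirement) if len(t) > 3}
    return [ln for ln in resume_lines if keywords & _tokens(ln)]


def run_workflow(jd_text: str, resume_lines: list,
                 approve: Callable[[list], str], log: RunLog) -> Optional[list]:
    matrix = [FitGapRow(r, map_evidence(r, resume_lines))
              for r in extract_requirements(jd_text)]
    log.record("fit_gap", rows=[(r.requirement, r.status) for r in matrix])

    # Human checkpoint: nothing is drafted until the matrix is approved.
    decision = approve(matrix)
    log.record("approval", decision=decision)
    if decision != "approve":
        return None

    # Validation rule: no claim without evidence, so gaps are never drafted.
    bullets = [f"{r.requirement}: {r.evidence[0]}" for r in matrix if r.status == "fit"]
    log.record("draft", bullets=bullets)
    return bullets
```

In a real UI the `approve` callback would block on the user's choice; here it can be any function that returns `"approve"`, `"edit"`, or `"regenerate"`. Drafting only from rows that have evidence is what keeps unsupported requirements out of the generated assets.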

Impact

- Demonstrates real-world agent design: tool constraints, HITL approvals, logging, and reliability tradeoffs.

- Shows AI PM thinking: user journey, risks/guardrails, measurable outcomes, and iteration loops.

- Creates a practical demo that recruiters immediately understand and that can be shown live.

Deployment & Monitoring

- Deployed with a lightweight UI + backend workflow runner.

- Logging: captures prompts, tool calls, and outputs for debugging and reliability.

- Monitoring: failure rate, regeneration rate, and time-to-final per artifact.

- Security note: user data stays private; supports deleting runs/artifacts.
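The monitoring metrics listed above could be derived from the run history roughly as follows. This is a sketch, not the deployed code: the `ArtifactRun` record and `summarize` function are hypothetical, and "regeneration rate" is assumed to mean the share of runs with at least one regeneration request.

```python
from dataclasses import dataclass
from statistics import median
from typing import Optional


@dataclass
class ArtifactRun:
    artifact: str                      # e.g. "outreach_draft"
    failed: bool                       # run ended in an error
    regenerations: int                 # times the user asked to regenerate
    seconds_to_final: Optional[float]  # None if the artifact was never finalized


def summarize(runs: list) -> dict:
    """Group runs by artifact and compute the three monitored metrics."""
    grouped: dict = {}
    for run in runs:
        grouped.setdefault(run.artifact, []).append(run)

    summary = {}
    for name, group in grouped.items():
        times = [r.seconds_to_final for r in group if r.seconds_to_final is not None]
        summary[name] = {
            "failure_rate": sum(r.failed for r in group) / len(group),
            # Assumption: a run counts once, however many regenerations it had.
            "regeneration_rate": sum(r.regenerations > 0 for r in group) / len(group),
            "median_time_to_final_s": median(times) if times else None,
        }
    return summary
```

A median is used for time-to-final so that one abandoned or very slow session does not dominate the figure; a mean or percentile would be an equally reasonable choice.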

Risks & Tradeoffs

- Over-automation risk: the agent could misrepresent experience; mitigated by requiring evidence mapping + approvals.

- Prompt drift: outputs vary over time; stabilized with templates + validation checks.

- Tool failures: added retries + graceful fallback to manual steps.
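The retry-then-fallback pattern for tool failures might look like the sketch below. The function name `call_with_retries`, the attempt count, and the exponential backoff schedule are illustrative assumptions; the source only states that retries and a manual fallback exist.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def call_with_retries(
    tool: Callable[[], T],
    manual_fallback: Callable[[], T],
    attempts: int = 3,
    base_delay_s: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Try the tool up to `attempts` times, then hand off to a manual step."""
    for attempt in range(attempts):
        try:
            return tool()
        except Exception:
            # Back off 0.5s, 1s, 2s, ... between tries; no wait after the last one.
            if attempt < attempts - 1:
                sleep(base_delay_s * 2 ** attempt)
    # Graceful fallback: e.g. ask the user to paste the JD text by hand.
    return manual_fallback()
```

Passing `sleep` as a parameter keeps the helper easy to test without real delays. Catching a narrower exception type than `Exception` would be preferable once the tool's actual failure modes are known.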

Tools

n8n or LangGraph • Next.js UI • LLM Tool Calling • Templates • Logging/Telemetry